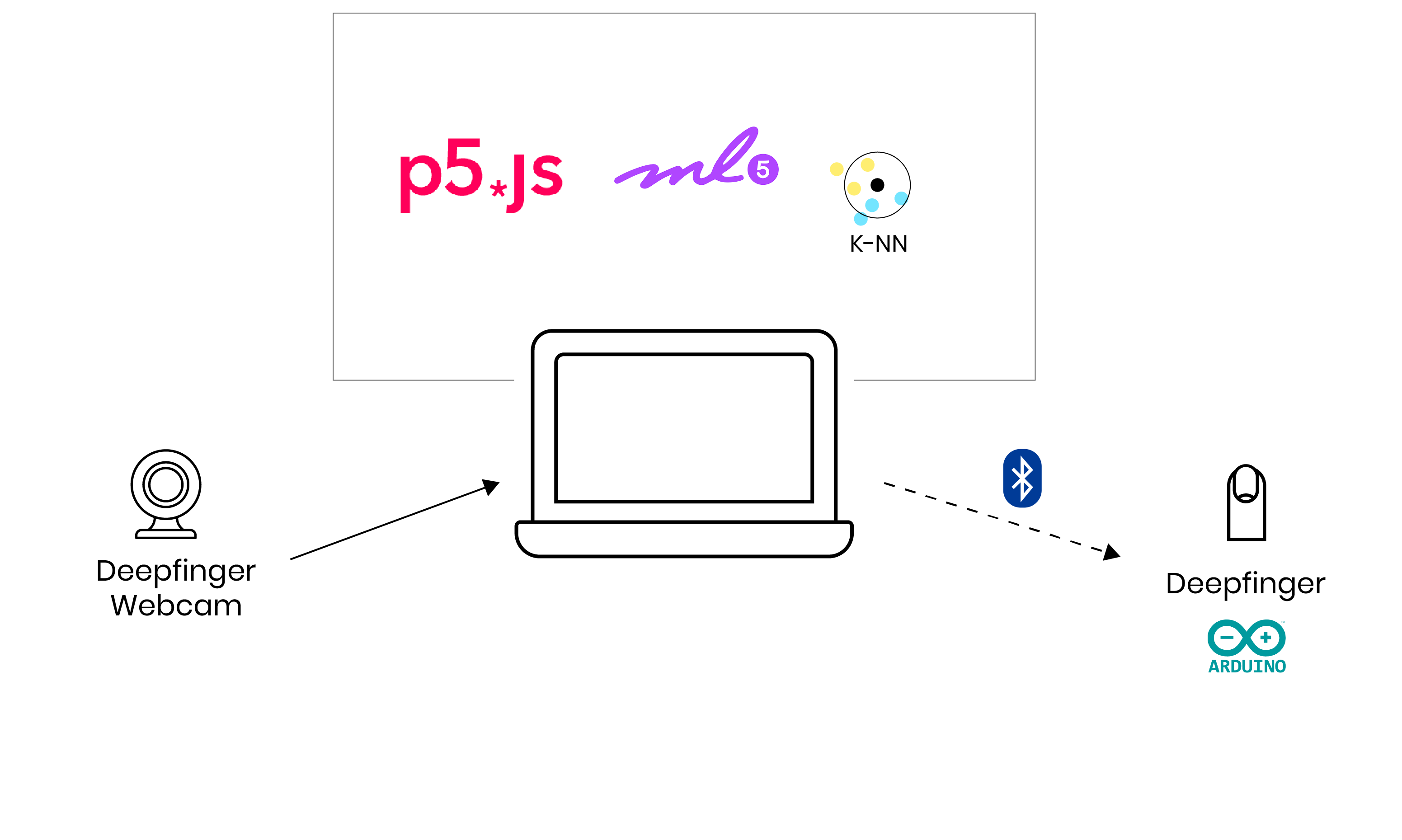

How does it work

On the laptop, incoming webcam video is processed in p5.js together with ml5.js, a machine learning library, to recognise poses. The k-nearest neighbour (KNN) algorithm then classifies the detected poses into different classes. Once the pose that triggers the finger is detected, a signal is sent to the Arduino over Bluetooth to move the finger.
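The classification and trigger steps can be sketched as follows. This is a minimal Python illustration of the general technique, not the project's actual p5.js/ml5.js code. It assumes that each pose arrives as a flat list of keypoint coordinates `[x0, y0, x1, y1, ...]`, and it uses a plain k-nearest neighbour vote with Euclidean distance. The class names, the `"trigger"` label, the `send` callback and the one-byte `b"P"` command are all hypothetical. The source does not say how the Bluetooth link is driven, so `send` stands in for whatever writes to it.

```python
import math
from collections import Counter
from typing import Callable, List, Tuple

# Hypothetical feature format: a flat list of keypoint coordinates
# [x0, y0, x1, y1, ...] as produced by some pose-estimation model.
Pose = List[float]


def euclidean(a: Pose, b: Pose) -> float:
    """Straight-line distance between two pose vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


class KNNPoseClassifier:
    """Minimal k-nearest neighbour classifier over stored example poses."""

    def __init__(self, k: int = 3) -> None:
        self.k = k
        self.examples: List[Tuple[Pose, str]] = []

    def add_example(self, pose: Pose, label: str) -> None:
        """Store one labelled training pose (e.g. recorded while holding it)."""
        self.examples.append((pose, label))

    def classify(self, pose: Pose) -> str:
        """Return the majority label among the k stored poses closest to `pose`."""
        nearest = sorted(self.examples, key=lambda ex: euclidean(ex[0], pose))[: self.k]
        votes = Counter(label for _, label in nearest)
        return votes.most_common(1)[0][0]


class FingerTrigger:
    """Sends one command when the trigger pose starts, not on every frame."""

    def __init__(self, send: Callable[[bytes], object], trigger_label: str = "trigger") -> None:
        self.send = send  # hypothetical: whatever writes to the Bluetooth link
        self.trigger_label = trigger_label
        self.was_triggered = False

    def update(self, label: str) -> None:
        """Feed the classifier's label for the current frame."""
        is_trigger = label == self.trigger_label
        if is_trigger and not self.was_triggered:
            self.send(b"P")  # hypothetical one-byte "press" command for the Arduino
        self.was_triggered = is_trigger


if __name__ == "__main__":
    clf = KNNPoseClassifier(k=3)
    # Made-up two-value "poses": a resting pose near (0, 0), a trigger pose near (1, 1).
    for p in ([0.0, 0.0], [0.1, 0.0], [0.0, 0.1]):
        clf.add_example(p, "idle")
    for p in ([1.0, 1.0], [0.9, 1.0], [1.0, 0.9]):
        clf.add_example(p, "trigger")

    trigger = FingerTrigger(send=lambda cmd: print("send", cmd))
    for frame in ([0.05, 0.05], [0.95, 0.95], [0.97, 0.9], [0.02, 0.0]):
        trigger.update(clf.classify(frame))
```

Only the transition into the trigger pose sends a command. Holding the pose across several webcam frames therefore moves the finger once, rather than once per frame.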

A live demo of the project for an audience. In this case, DeepFinger is used to control Netflix with a pose.

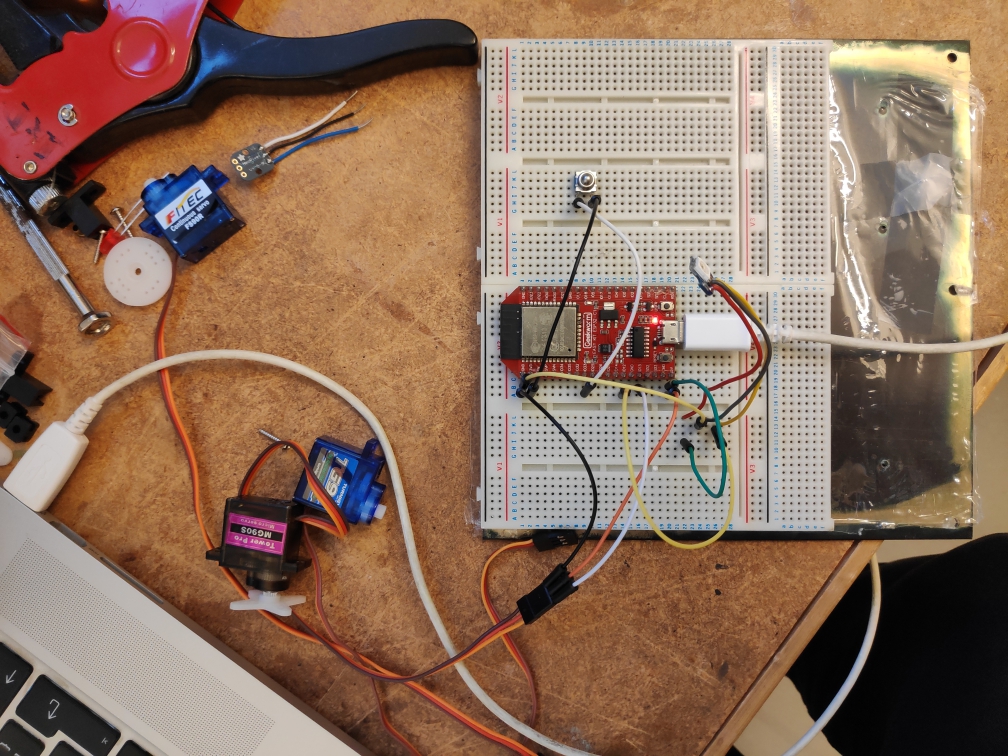

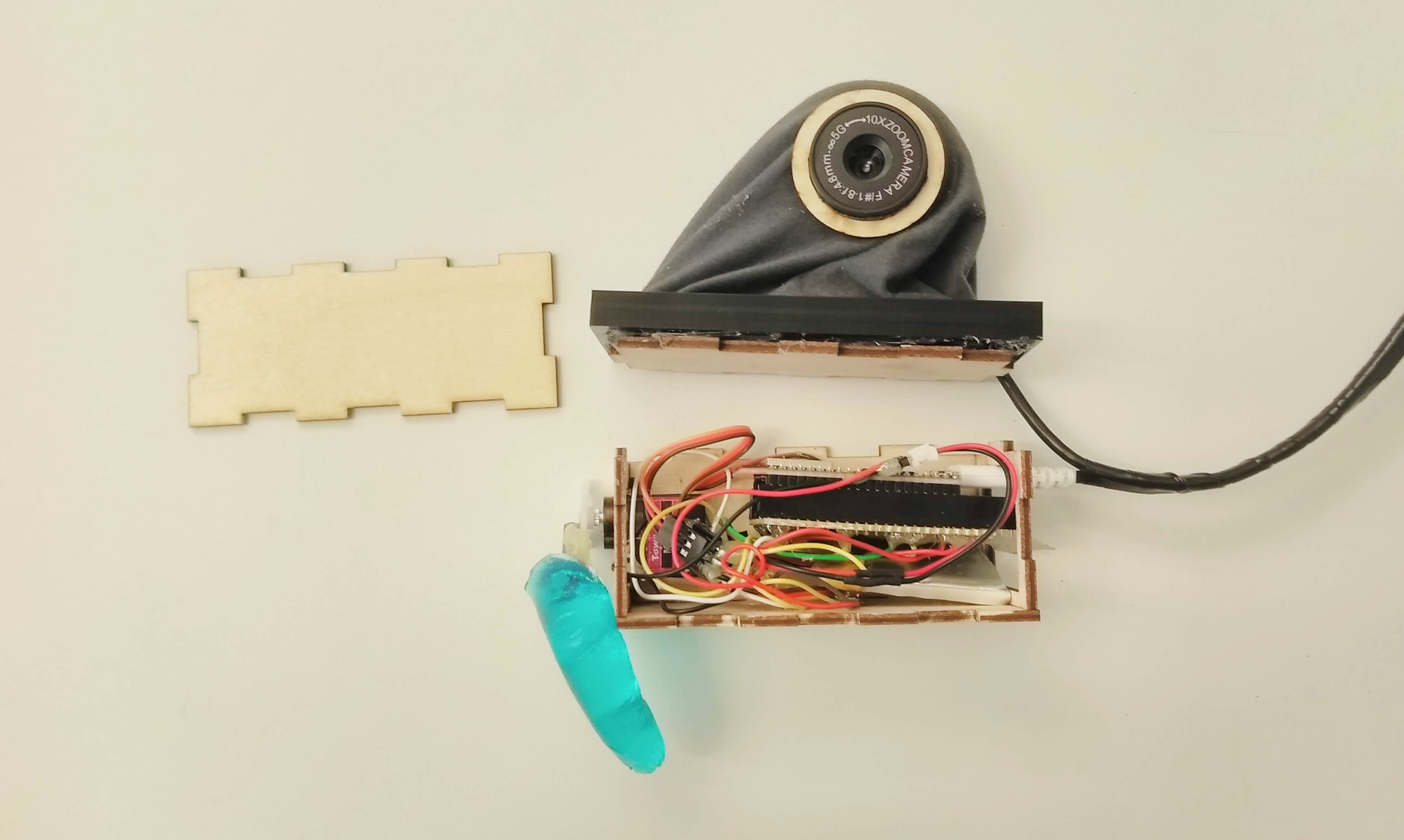

Process